Photo: Andrea Danti – Fotolia.com

Access to RAM is up to a million times faster than access to conventional hard drives, and even in data throughput the factor is still almost 100. Memory prices fall by an average of 30 percent per year. At the same time, processors for almost all architectures are becoming increasingly powerful. Yet most access methods in databases are still at the algorithmic state of the 1980s and 1990s: they focus on the most efficient possible interaction between hard disk and RAM. But is that still up to date?

SAP HANA

To throw off this legacy, and of course to shake up the lucrative market for database management systems (DBMS) through technical progress, developers from the Hasso Plattner Institute and Stanford University presented the first examples of their relational in-memory database for real-time analysis in 2008. Initially, and ironically, titled “Hasso’s New Architecture”, it became SAP HANA two years later, short for “High Performance Analytic Appliance”.

The goal was a single platform, or even a single dataset, that could serve both operational applications (online transaction processing, OLTP) and analytical purposes (online analytical processing, OLAP). The developers wanted to eliminate the previously rigid and time-consuming separation between operational and business intelligence tasks, creating an extraordinary productivity advantage for users of the appliance. Nevertheless, in 2011 HANA came to market “only” for SAP Business Warehouse. Since mid-2013 it has also been available for operational SAP modules.

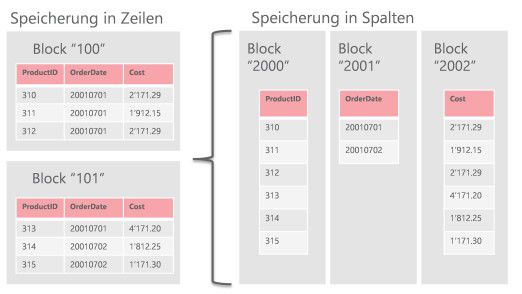

HANA is purposely designed to keep all data in main memory. The database makes very intensive use of CPU caches and organizes data predominantly in columns rather than in rows, as has been common practice. In addition, it compresses the data in RAM and on disk, and it parallelizes data operations across the CPU cores of multicore systems and even across multiple computing nodes.
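The difference between row-oriented and column-oriented storage, and the reason columns compress well, can be illustrated with a small sketch. It is not HANA code: the sample table, the function names and the choice of dictionary encoding as the compression scheme are assumptions made purely for illustration.

```python
# Hypothetical sample table stored row by row, as a classic DBMS would:
# (order id, country, amount)
rows = [
    (1, "DE", 120.0),
    (2, "DE", 80.0),
    (3, "US", 200.0),
    (4, "US", 50.0),
    (5, "DE", 30.0),
]


def to_columns(rows):
    """Pivot a list of row tuples into one list per column."""
    return [list(col) for col in zip(*rows)]


def dictionary_encode(values):
    """Replace each value by a small integer code plus a lookup dictionary.

    Columns with few distinct values shrink considerably this way
    (illustrative scheme, not a specific vendor format).
    """
    dictionary = sorted(set(values))
    code_of = {value: code for code, value in enumerate(dictionary)}
    return dictionary, [code_of[value] for value in values]


def sum_where(codes, dictionary, wanted, measure):
    """Aggregate one column, filtered on the integer codes of another."""
    target = dictionary.index(wanted)
    return sum(m for code, m in zip(codes, measure) if code == target)


ids, countries, amounts = to_columns(rows)
country_dict, country_codes = dictionary_encode(countries)
total_de = sum_where(country_codes, country_dict, "DE", amounts)
```

An analytical query such as "total amount for DE" touches only the two columns it needs and compares small integers instead of strings, which is the kind of access pattern that benefits from CPU caches.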

Photo: Trivadis AG

And suddenly existing IT systems were called into question: will analytical queries now be a million times faster and perhaps even available online? Should we now part with data warehouses and older DBMS? Can the lead built up by SAP still be caught up? The pressure on traditional database vendors such as IBM grew, and soon they came to market with their own in-memory solutions. To this day, of course, no two approaches are alike.

IBM DB2 BLU Acceleration

In April 2013, the in-memory feature package “BLU Acceleration” was released as part of the advanced editions of the IBM DB2 database. In principle, the same techniques are used here as in HANA. However, IBM simply integrates them into its own technology and allows conventional and memory-optimized tables to coexist within the same database. These tables can be converted from one format to the other and, according to IBM, should accelerate well-optimizable queries by a factor of 8 to 40. In addition, compression is said to save up to 90 percent of space both in RAM and on disk. Unlike HANA, however, BLU Acceleration is today still clearly focused on analytical workloads. In production use, OLTP should therefore continue to run on row-oriented tables.

Microsoft SQL Server In-Memory Database Features

Microsoft SQL Server followed the trend. As early as the SQL Server 2012 release, column-oriented, compressed in-memory indexes could be created on specific tables for complex queries. This made it possible to speed up analysis considerably. The indexes are still created in addition to the normal table and had to be rebuilt manually after each change; with the new SQL Server 2014, however, they are updatable.

With version 2014, “In-Memory OLTP” added a new solution exclusively for accelerating transactions on operational systems such as ERP or CRM systems. Tables of this type must be held completely in memory. They allow significant performance gains for transaction-intensive applications. According to Microsoft, these amount to a factor of 100 or even higher, depending on the application. Here too, of course, in-memory tables coexist peacefully with conventional tables in the same database and can be combined in almost any way.

So Microsoft has two separate solutions in its portfolio: one for OLTP and one for analysis.

Oracle Database In-memory option

In July 2014, Oracle finally followed suit and equipped its 12c database with a paid in-memory option. This consists essentially of an “in-memory column store” to speed up analytical queries, but by design it is also partly suitable for OLTP applications.

The Oracle database works much like IBM BLU Acceleration and is said to achieve performance improvements by a factor of 10 to 100, depending on the application. Unlike the other solutions, however, the Oracle database does not write any in-memory data to disk. Column-oriented data management, automatic indexing, compression: all of these operations take place exclusively in main memory. All disk-relevant operations are carried out by traditional means and are applied redundantly, but consistently, to the in-memory structures. On the one hand, this results in disadvantages due to redundant resource consumption and the lack of compression on disk.

On the other hand, this approach also has a special advantage. For Oracle, “In-Memory” is only a switch with which tables, or parts of them, can be optimized as in-memory tables while online. So there is no need to migrate data to benefit from the new possibilities. This makes a test very easy.
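The dual-format idea described for Oracle can be sketched as a toy model: a durable row format, plus an optional in-memory column store that is switched on per table, maintained redundantly on every write and rebuilt after a restart. The class and method names are hypothetical, and the sketch makes no claim about how Oracle implements this internally.

```python
class DualFormatTable:
    """Toy table: rows are the durable format, columns an optional in-memory copy."""

    def __init__(self, column_names):
        self.column_names = list(column_names)
        self.rows = []        # stands in for the row format persisted on disk
        self.columns = None   # in-memory column store; None while switched off

    def set_inmemory(self, enabled):
        """The 'switch': build or drop the column store; the rows stay untouched."""
        if enabled:
            self.columns = {
                name: [row[i] for row in self.rows]
                for i, name in enumerate(self.column_names)
            }
        else:
            self.columns = None

    def insert(self, row):
        """Write the row format first, then maintain the column store redundantly."""
        self.rows.append(tuple(row))
        if self.columns is not None:
            for name, value in zip(self.column_names, row):
                self.columns[name].append(value)

    def restart(self):
        """Simulate a restart: the in-memory part is lost and rebuilt from the rows."""
        was_enabled = self.columns is not None
        self.columns = None
        self.set_inmemory(was_enabled)

    def column_sum(self, name):
        """Use the column store if present, otherwise scan the rows."""
        if self.columns is not None:
            return sum(self.columns[name])
        i = self.column_names.index(name)
        return sum(row[i] for row in self.rows)


orders = DualFormatTable(["id", "amount"])
orders.insert((1, 10))
orders.set_inmemory(True)   # no data migration, only a switch
orders.insert((2, 32))
orders.restart()            # column store is rebuilt from the durable rows
total = orders.column_sum("amount")
```

The sketch also shows the cost side mentioned in the text: every insert is processed twice, and the column store occupies memory in addition to the row data.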

Similarities and differences

The thrust of the “Big Three” is clear: switching to a different, separate in-memory platform, as HANA intends, should no longer be necessary. In the simplest case, the administrator only needs to flip one switch in existing databases to speed up all applications many times over. But is that really realistic? It quickly becomes apparent that all the new in-memory databases use similar mechanisms. These include column-oriented data management, automatically generated, memory-optimized data structures, intensive use of CPU features, and compression of data in main memory and/or on disk.

But there are also noticeable differences between the individual solutions. First, there is the question of whether database size is limited by the available memory. SAP HANA and the SQL Server In-Memory OLTP solution load the data completely into main memory, at the latest with the first query, while Oracle and IBM DB2 do not require this, which simplifies working with large amounts of data. In addition, Oracle does not write the compressed in-memory data to storage media in compressed form. It must be rebuilt after every restart of the database. This approach provides more flexibility in administration and a wider range of applications, but it saves no space on disk and creates redundancy in processing.

And then there is the question of which application types can be served. Microsoft offers special table types optimized for OLTP, while IBM offers table types for purely analytical workloads. SAP HANA distinguishes between row-oriented and column-oriented data management as needed and supports both types of workload. Oracle positions its solution mainly for the analytical field, but also promises improvements for OLTP applications, because fewer indexes on tables are needed and DML throughput is thus improved.

Which in-memory approach is the right one?

Companies basically face two questions: which in-memory approach is right, and which solution should I buy?

The answers cannot be generalized, however; they depend on the individual scenario. In any case, companies should take a differentiated look at all technical, organizational and cost-related particulars, both for new development and for an application migration. They should be aware that few use cases work without additional adjustments, and that they will need to invest in corresponding services. In addition, the performance benefits will not materialize for all applications, and some applications may even slow down. In some cases, functional limitations will have to be accepted.

Before implementing an in-memory database, a thorough evaluation should take place, both when moving to a new platform and for a planned migration. Even in the case of a seemingly simple switch to in-memory technology within the familiar RDBMS, it is advisable to identify the suitable data and to check all applications in detail. This can be done either by in-house teams or by an external service provider.

Is it worth the in-memory technology?

For IT decision makers, the final question is whether a switch to in-memory technology is worthwhile, even, or especially, when in some cases it is merely an upgrade of existing systems. The fact is that in specific application scenarios, in both OLTP and analytics, the solutions work much faster and more efficiently than conventional techniques. However, only very specific processes can be accelerated by the factors mentioned above; the average performance gain will be significantly lower.
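Why the average gain falls so far short of the headline factors can be made concrete with Amdahl's law. The article does not name this formula; it is brought in here as an illustration, and the workload share used below is a hypothetical figure, not a measurement.

```python
def overall_speedup(accelerated_fraction, factor):
    """Amdahl's law: speedup of a whole workload when only a fraction benefits.

    accelerated_fraction: share of total runtime that the in-memory
                          technology can accelerate (0.0 to 1.0)
    factor:               speedup achieved on that share (e.g. 100)
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if factor <= 0:
        raise ValueError("factor must be positive")
    remaining = (1.0 - accelerated_fraction) + accelerated_fraction / factor
    return 1.0 / remaining


# Hypothetical workload: half of the runtime is accelerated by a factor of 100.
speedup = overall_speedup(0.5, 100)
```

Under these assumed figures the overall speedup is just under 2, because the half of the runtime that does not benefit dominates the total.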

In many cases, however, in-memory technology makes it possible to reduce costs for hardware and licenses. These cost reductions only become visible after the switch, though, because the transition to the new technology is almost always associated with application adjustments, which have to be included in the overall cost calculation.

In-memory databases are a real asset to the database world. But they are not suitable for every application and should be examined thoroughly before committing to a new technology. (Bw)
